A part of FEV Group

Your requirements are already behind. AI can close the gap.

Author: FEV.io

Reading time: 7 mins

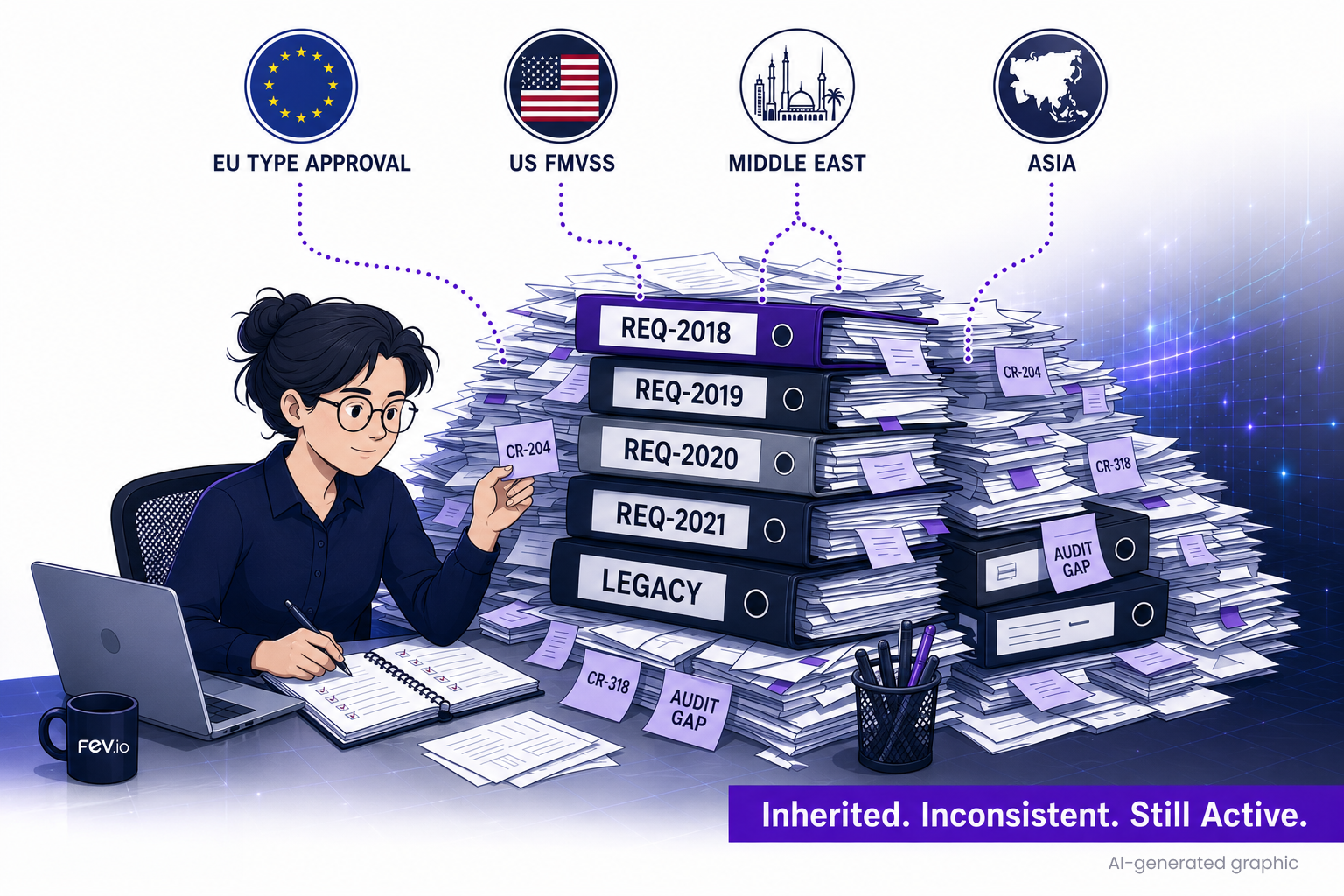

Six months ago, the specification was nearly finished. Then a regulation update landed. Three change requests came in over the same week. A new product variant got added to the program. The traceability audit found gaps everyone had been planning to fix “soon”. The backlog grew faster than anyone could close it.

If you work in requirements engineering for regulated products, whether automotive, industrial, defense or energy, you have probably lived this. The bottleneck is throughput, not tooling.

Most programs don’t start from scratch. They inherit a requirements base. Sometimes a few hundred items, sometimes tens of thousands. Some have been updated for the new program. Many have not. Some still carry assumptions from regulations that have since changed.

Type-approval rules in the EU. Federal safety standards in the US. The compliance frameworks taking shape across the Middle East and Asia. They rarely line up, and every market adds its own obligations. Each one has to be traced down through system and component levels. Meanwhile change requests pile up faster than they get worked off, contradictions slip in between requirement layers where nobody is looking, interface names diverge from what the architecture actually defines, and the rationale and verification criteria nobody had time to update last quarter are still missing this quarter.

The team knows what good looks like: clean specs, audit-proof traceability, no silent contradictions between layers, rationales and verification kept current. Volume is the problem. They spend more time maintaining documents than making engineering decisions.

None of what follows is theoretical. These are jobs running every day inside live engineering programs.

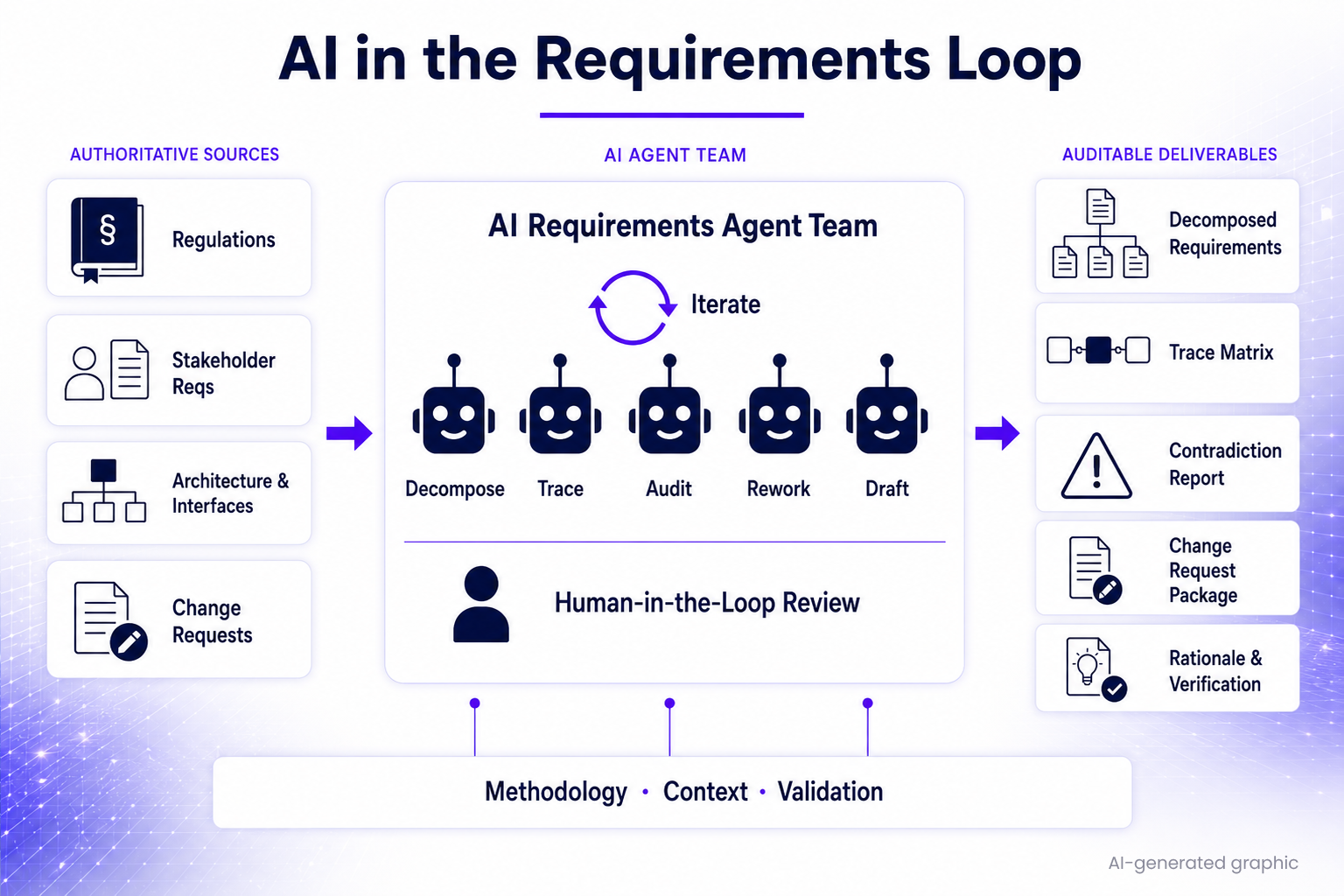

Regulatory decomposition. Take a UN regulation or a national standard. The AI walks through the clauses, picks out what is applicable, builds testable obligations at the system level, then decomposes them down to component-level requirements with traceback to the source clause. Work that takes a team days comes out drafted within hours.

Quality rework at scale. Every requirement in the set, whether the set is two hundred items or twenty thousand, gets classified: reuse, update, retire. Each one is then checked against the project’s own style guide, the naming conventions, the testability rules. The whole set, not only the requirements that happen to be in scope this cycle.
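To make the style-guide check concrete, here is a minimal sketch in Python. It is not our production tooling. The ID pattern, the list of vague terms and the `check_requirement` helper are all hypothetical stand-ins for whatever a project’s own style guide defines.

```python
import re
from dataclasses import dataclass

# Hypothetical style-guide rules; a real project would load its own.
VAGUE_TERMS = {"appropriate", "adequate", "fast", "user-friendly", "as needed"}
ID_PATTERN = re.compile(r"^(SYS|CMP)-\d{4}$")  # assumed naming convention


@dataclass
class Requirement:
    req_id: str
    text: str


def check_requirement(req: Requirement) -> list[str]:
    """Return the style-guide findings for one requirement (empty list = clean)."""
    findings = []
    lowered = req.text.lower()
    # Naming convention: the ID must match the assumed project pattern.
    if not ID_PATTERN.match(req.req_id):
        findings.append("id does not follow naming convention")
    # Testability rule (assumed): binding requirements use the keyword "shall".
    if not re.search(r"\bshall\b", lowered):
        findings.append("missing binding keyword 'shall'")
    # Testability rule (assumed): vague terms cannot be verified.
    for term in sorted(VAGUE_TERMS):
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            findings.append(f"vague term: {term}")
    return findings


if __name__ == "__main__":
    sample = Requirement("REQ1", "The system should be fast.")
    for finding in check_requirement(sample):
        print(f"{sample.req_id}: {finding}")
```

Rules like these are cheap to run over the whole set on every cycle. The judgement about what to do with each finding stays with the engineer.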

Traceability and coverage. Stakeholder requirements get traced down through system and component levels into implementation, gaps are surfaced, and the trace matrix comes out as a side effect. Every link is sourced and auditable.
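The gap check itself is simple once the links exist. The sketch below assumes three levels (stakeholder, system, component) and a flat list of parent-to-child links; the level names, the IDs and the `find_trace_gaps` function are illustrative assumptions, not a description of any particular requirements tool.

```python
from collections import defaultdict

# Assumed level order, top to bottom.
LEVEL_ORDER = ["stakeholder", "system", "component"]


def find_trace_gaps(levels, links):
    """Report requirements with missing trace links.

    levels: dict mapping requirement id -> level name
    links:  iterable of (parent_id, child_id) pairs
    """
    children = defaultdict(set)
    parents = defaultdict(set)
    for parent, child in links:
        children[parent].add(child)
        parents[child].add(parent)

    gaps = {"no_downstream": [], "no_upstream": []}
    for req_id, level in sorted(levels.items()):
        # Everything above the lowest level needs at least one child.
        if level != LEVEL_ORDER[-1] and not children[req_id]:
            gaps["no_downstream"].append(req_id)
        # Everything below the top level needs at least one parent.
        if level != LEVEL_ORDER[0] and not parents[req_id]:
            gaps["no_upstream"].append(req_id)
    return gaps


if __name__ == "__main__":
    levels = {
        "STK-1": "stakeholder",
        "SYS-1": "system",
        "SYS-2": "system",
        "CMP-1": "component",
    }
    links = [("STK-1", "SYS-1"), ("SYS-1", "CMP-1")]
    print(find_trace_gaps(levels, links))
```

In this example `SYS-2` is reported in both lists: nothing above it justifies it and nothing below it implements it.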

Contradiction and consistency. The AI pulls behavioral models and transition logic out of specification prose, then looks for what doesn’t add up. Contradictions between requirement layers. Ambiguous wording. Missing edge cases. The issues that normally hide until integration testing surface during specification review instead.

Change requests. When new gaps come in, the AI drafts the change request package: affected items, proposed wording, impact across the requirement tree. The engineer reviews, edits, submits.
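“Impact across the requirement tree” comes down to a walk over the trace links. As a rough sketch, assuming the same kind of parent-to-child links and a hypothetical `impacted_items` helper, a breadth-first traversal lists everything downstream of a changed item:

```python
from collections import deque


def impacted_items(changed_id, links):
    """Return all items downstream of changed_id, in breadth-first order.

    links: iterable of (parent_id, child_id) trace links (assumed structure).
    """
    downstream = {}
    for parent, child in links:
        downstream.setdefault(parent, []).append(child)

    seen = {changed_id}
    queue = deque([changed_id])
    impacted = []
    while queue:
        current = queue.popleft()
        for child in downstream.get(current, []):
            if child not in seen:  # guard against shared children and cycles
                seen.add(child)
                impacted.append(child)
                queue.append(child)
    return impacted


if __name__ == "__main__":
    links = [
        ("SYS-1", "CMP-1"),
        ("SYS-1", "CMP-2"),
        ("CMP-1", "TEST-1"),
        ("SYS-2", "CMP-3"),
    ]
    print(impacted_items("SYS-1", links))
```

The list is only the starting point for the change request package. Whether each affected item actually needs new wording is the engineer’s call.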

Rationales and verification. The “why” behind each requirement, the verification method, the acceptance criteria stay current. They get updated whenever the requirement they belong to changes, instead of the usual situation where they are three revisions out of date.
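One simple way to spot the “three revisions out of date” case is to compare revision counters. This sketch assumes each record stores the revision at which the requirement, its rationale and its verification criteria were last touched; the `TraceRecord` fields and the `stale_fields` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TraceRecord:
    req_id: str
    requirement_rev: int   # revision of the requirement text
    rationale_rev: int     # requirement revision the rationale was written for
    verification_rev: int  # requirement revision the verification was written for


def stale_fields(record: TraceRecord) -> list[str]:
    """Name the attached fields that lag behind the requirement text."""
    stale = []
    if record.rationale_rev < record.requirement_rev:
        stale.append("rationale")
    if record.verification_rev < record.requirement_rev:
        stale.append("verification")
    return stale


if __name__ == "__main__":
    record = TraceRecord("SYS-0042", requirement_rev=5, rationale_rev=2, verification_rev=5)
    print(record.req_id, stale_fields(record))
```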

Across all of these, the labour split is the same. AI does the volume work and the cross-checking. The engineer makes every judgement call. Nothing reaches the customer without engineer sign-off.

This is also where most AI initiatives quietly fail.

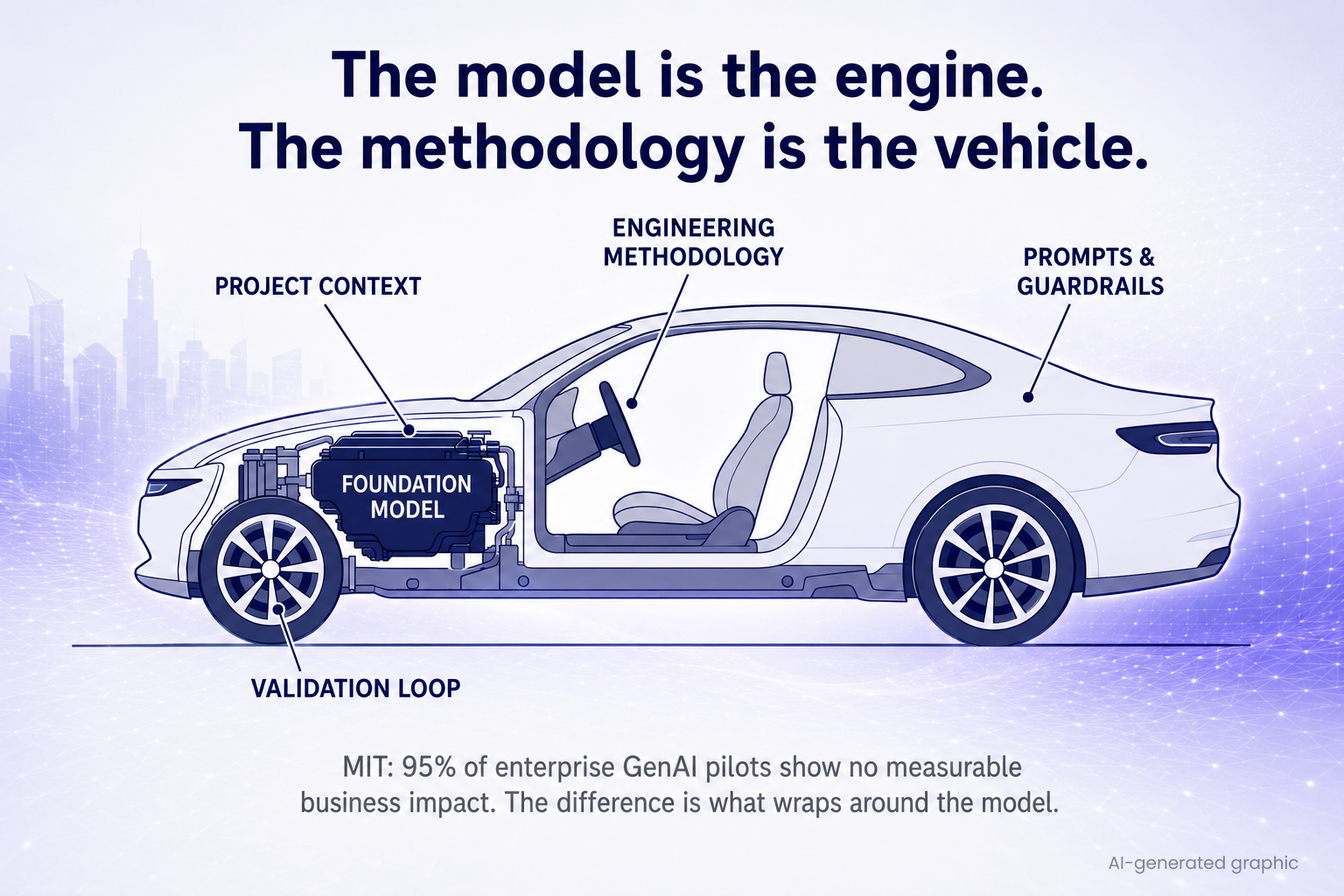

MIT research shows that 95% of enterprise GenAI pilots produce no measurable business impact. That is not because the models can’t do the work. Today’s foundation models, including the European-hosted, TISAX-compliant deployments we run customer projects on, have the analytical and linguistic capability that requirements engineering needs.

We’ve measured this directly. At REConf 2025, our team published a comparison of two LLM approaches to requirements review and generation against industrial datasets. Human reviewers rated most of the generated requirements as correct, useful, and an improvement over the baseline. In most cases, though, the engineer still had follow-up work to bring them to release quality. The capability was clearly there. The value lived in the methodology around it. A year of running these workflows in active customer programs has since only sharpened that picture, and the methodology has matured with every project.

What separates the deployments that work from the pilots that don’t is everything around the model. Project context that is actually relevant to the question being asked. Prompts written by someone who has done the engineering work themselves. A validation loop that catches the wrong answers before they propagate. The discipline to correct the AI once and have that correction stick across every requirement level it touches, not just the one you happened to notice.

The AI is a power tool. Whether it makes anything depends on who is holding it.

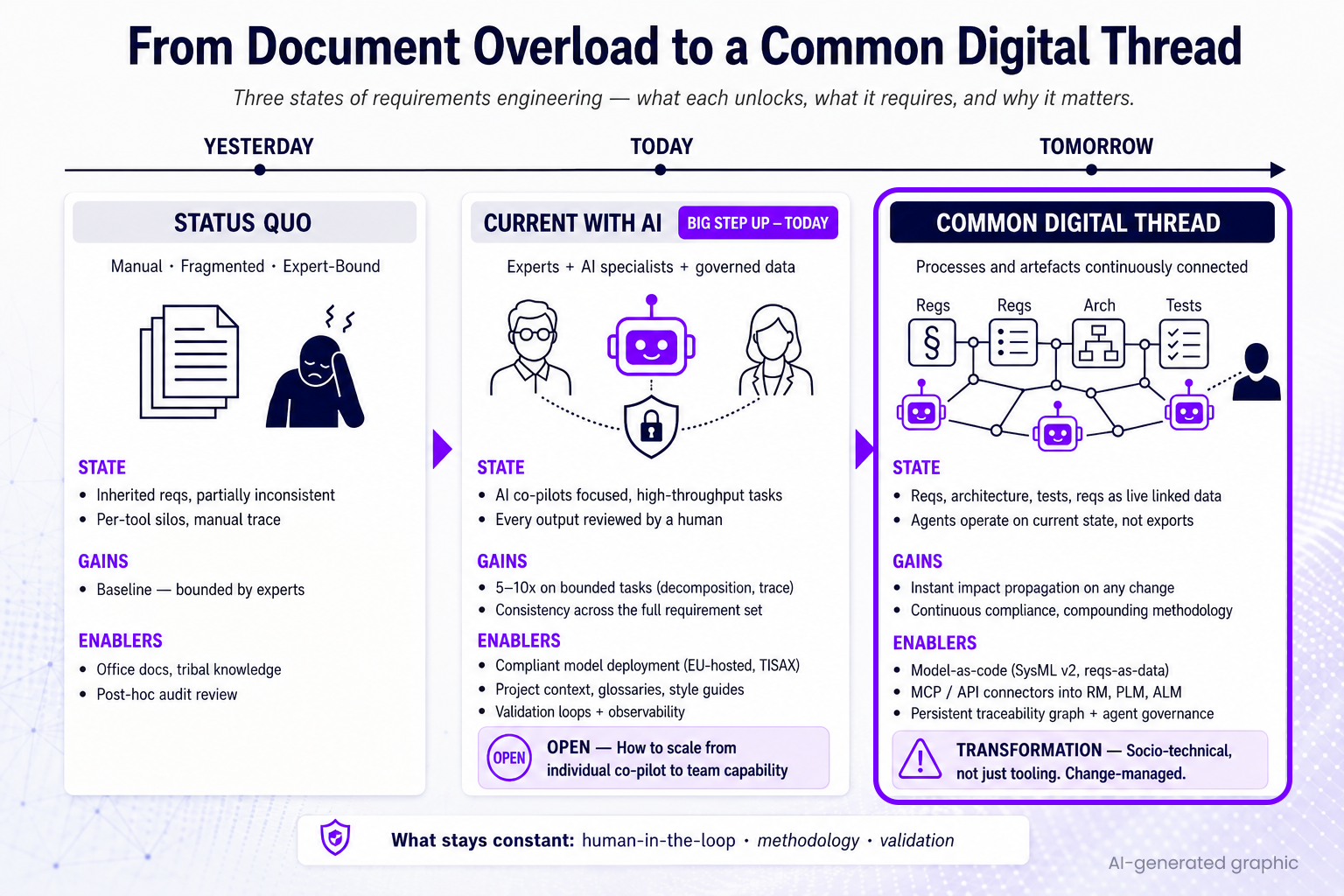

AI delivers at any level of integration. You can drop it next to your existing toolchain with very little setup and still get value. The deeper it can reach into authoritative sources, though, the better it gets. Working off a stale export, it is slower and it misses things. Reading the live state of requirements, architecture, interfaces and signal definitions, it catches more, traces more, drafts more accurately.

Today’s state is already a big step up from manual work: domain experts paired with AI application specialists, working under governed data access, with compliance, project context curation, validation loops and observability all in place. On bounded tasks like regulatory decomposition and trace generation, the time compression is roughly 5–10x. The real challenge is how this becomes a consistent team capability across many programs, rather than a single engineer’s productivity boost.

What is coming is a common digital thread: requirements, architecture, interfaces, regulations, change requests, verification evidence sitting in one connected, machine-readable graph that agents read and update continuously. Reaching it is a socio-technical change. Engineering organizations are right to be careful about change, so deeper integration gets earned step by step. You start lightweight, deepen as trust grows. Through every level of integration, the basics stay constant: human-in-the-loop, working methodology, validation discipline.

There is a compounding dynamic that makes early adoption pay off disproportionately.

Teams that start now build up playbooks: reusable, documented ways of handling the recurring work, getting better every project. When an engineer corrects the AI’s reading of a regulatory phrase, that correction propagates to every level and feature where the same phrase shows up, immediately. The methodology improves with every cycle, automatically.

The gain isn’t one-off. The capability builds on itself. A team that starts now will, a year from now, have accumulated methodology and consistency and speed that is hard to copy from the outside. Every new regulation, every product variant, every change cycle widens the gap between teams that have invested and teams that haven’t.

AI will be part of requirements engineering. The only thing to decide is whether you build the capability now or have to catch up to it later.

FEV has been running AI agents inside live requirements engineering programs for some time now, in regulated industries where the work has to stand up to audit. They are not pilots. They are working engineering tools producing review-ready, process-compliant deliverables.

We have the practical experience: how to activate compliant models for requirements work, what to feed them, how to structure the validation loop, how to scale the methodology across teams. That is available now.

If your requirements team is spending more time managing artifacts than engineering solutions, let’s talk.

If you want to accelerate your requirements work, get traceability that holds up under audit, or roll out AI-assisted workflows across more programs, get in touch.

Get in touch with us at: solutions@fev.io